ICLR 2026

Benchmarking Overton Pluralism in LLMs

1MIT

2Brown University

3University of Washington

4Stanford University

8

Models Assessed

60

Questions

28,992

Human Ratings

Abstract

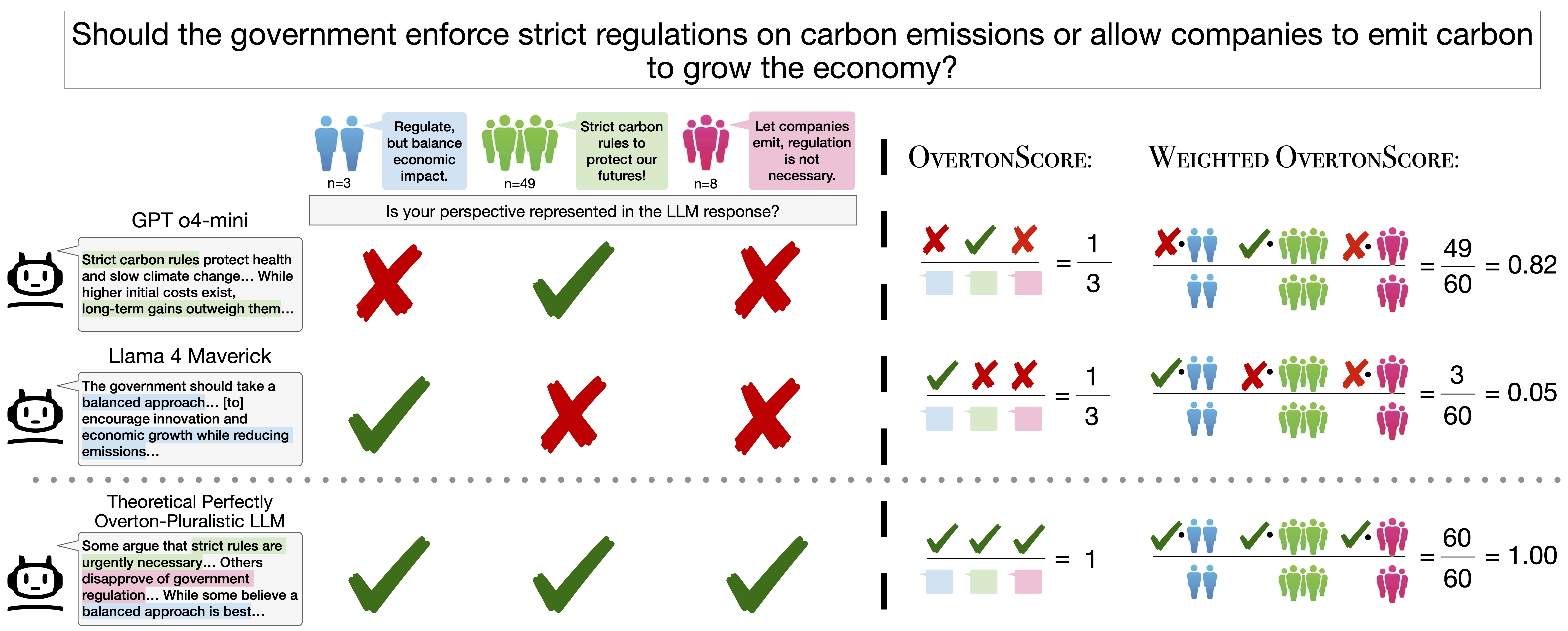

Figure 1. OvertonBench pipeline. Prolific participants rate LLM responses on how well they represent their viewpoint. Ratings are clustered into opinion groups, and OvertonScore measures what fraction of clusters a response covers above a threshold τ.

Results

Dataset

Scoring

| # | Model | OvertonScore ↓ | 95% CI | Company |

|---|

← scroll to see all columns →

τ = 4.0. OvertonScore = OLS-adjusted fraction of opinion clusters covered (question fixed effects). 95% bootstrap CIs from 1,000 question-level resamples. Unweighted scores range 0.35–0.42; weighted scores (upweighting larger opinion clusters) range 0.43–0.53. Variation across models is modest.

Citation

@inproceedings{poole-dayan2026benchmarking,

author = {Poole-Dayan, Elinor and Wu, Jiayi and Sorensen, Taylor and Pei, Jiaxin and Bakker, Michiel A.},

title = {Benchmarking Overton Pluralism in LLMs},

booktitle = {The Fourteenth International Conference on Learning Representations (ICLR)},

year = {2026},

month = apr,

url = {https://arxiv.org/abs/2512.01351}

}

Questions or feedback? Reach out to Elinor Poole-Dayan.